Stereolabs - ZED 2/2i

ZED 2/2i cameras by Stereolabs are designed to enhance computer vision applications by providing accurate depth information. They feature dual lenses that capture high-resolution images and generate precise depth maps. These cameras enable accurate object detection and recognition. Their simultaneous color and depth capabilities make them versatile for AI-driven tasks, such as autonomous navigation, robotics, and augmented reality. ZED cameras offer CUDA acceleration, leveraging the power of NVIDIA GPUs to accelerate depth computation and object detection, resulting in faster and more efficient processing of 3D vision tasks. Easy to integrate and widely used by professionals, ZED cameras are a go-to choice for those seeking advanced 3D vision and object detection capabilities.

This article covers the ZED, ZED Mini, ZED 2, and ZED 2i. For ZED X instructions, see the ZED X and Nvidia Jetson Orin Nano article.

Getting started

To run the camera, your setup must meet the requirements listed below:

Software

Hardware

- Graphics Card: NVIDIA GPU with Compute Capability > 6.1, or a Jetson embedded GPU *

- Connectivity: USB 3.0

* The ZED camera can be used without an NVIDIA GPU, but its capabilities are limited in that case. Image acquisition is still available, as shown in the ZED CPU Demo.
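The GPU requirement above can be checked from a terminal. The following is a minimal sketch, not an official Stereolabs tool: the helper name `meets_compute_capability` is hypothetical, and the `compute_cap` query field is assumed to be supported by your installed `nvidia-smi` (older drivers, and some Jetson images, may not provide it).

```shell
# Hypothetical helper: succeeds if the compute capability in $1 (e.g. "8.6")
# is strictly greater than the 6.1 threshold quoted in this article.
meets_compute_capability() {
  awk -v cap="$1" 'BEGIN { exit !(cap + 0 > 6.1) }'
}

# Query the GPU only when nvidia-smi is installed.
# Assumption: the driver supports the compute_cap query field.
if command -v nvidia-smi >/dev/null 2>&1; then
  cap="$(nvidia-smi --query-gpu=compute_cap --format=csv,noheader | head -n 1)"
  if meets_compute_capability "$cap"; then
    echo "GPU compute capability $cap: OK"
  else
    echo "GPU compute capability '$cap': below the documented requirement"
  fi
else
  echo "nvidia-smi not found: no NVIDIA driver detected on this machine"
fi
```

On a machine without an NVIDIA driver the script only prints a notice, which matches the limited, CPU-only mode described in the footnote.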

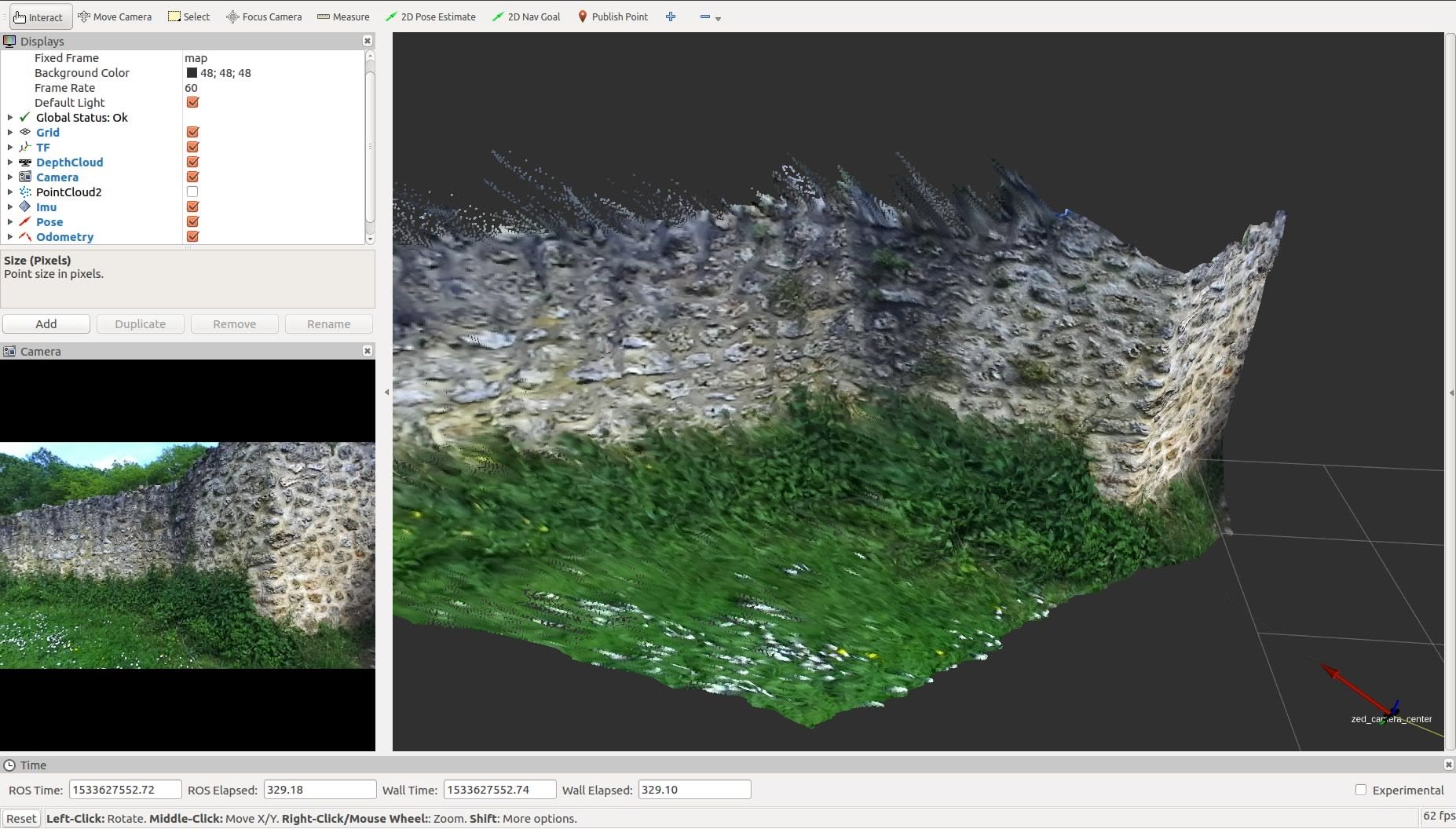

Demo

In this demo, we'll walk you through using the ZED camera with ROS 2 via a Docker image. You'll also learn how to visualize data, including image previews and point clouds, using RViz. The demo is based on the zed-ros2-wrapper repository.

ROSbot XL with NVIDIA Jetson Nano and ZED 2 camera.

Start guide

1. Plug in the device

For the best performance, connect the camera to a USB 3.0 port (port requirements depend on the camera model). Then run the lsusb command to check that the device is visible.
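The lsusb check can be narrowed to the camera itself. This is a sketch under one stated assumption: that Stereolabs devices report the USB vendor ID `2b03`. Compare it with your own lsusb output before relying on it. The helper name `has_zed_device` is hypothetical.

```shell
# Assumption: Stereolabs USB vendor ID. Verify it against your own lsusb output.
ZED_VENDOR_ID="2b03"

# Hypothetical helper: succeeds if the lsusb-style text on stdin
# lists at least one device from that vendor.
has_zed_device() {
  grep -qi "ID ${ZED_VENDOR_ID}:"
}

# Run the check only where lsusb is available.
if command -v lsusb >/dev/null 2>&1; then
  if lsusb | has_zed_device; then
    echo "ZED camera detected"
  else
    echo "No ZED camera found; check the cable and the USB port"
  fi
fi
```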

2. Clone repository

git clone https://github.com/husarion/zed-docker.git

cd zed-docker/demo

3. Select the appropriate Docker image

export ZED_IMAGE=<zed_image>

Replace <zed_image> with one of the following images, depending on the type of computer you are working on:

| ZED Image | Description |
|---|---|
| husarion/zed-desktop:humble | for desktop platform with CUDA 11.7 |
| husarion/zed-jetson:foxy | for Jetson platform with Jetson Linux 35.3.1 |
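The image choice can also be derived from the machine architecture. The sketch below assumes that `aarch64` means a Jetson board and `x86_64` means a desktop with an NVIDIA GPU, which may not hold for every setup. The function name `select_zed_image` is hypothetical; the image names are the ones from the table above.

```shell
# Hypothetical helper: maps an architecture string (as printed by `uname -m`)
# to one of the images listed in this article.
# Assumption: aarch64 => Jetson, x86_64 => desktop with an NVIDIA GPU.
select_zed_image() {
  case "$1" in
    aarch64) echo "husarion/zed-jetson:foxy" ;;
    x86_64)  echo "husarion/zed-desktop:humble" ;;
    *)       echo "unsupported architecture: $1" >&2; return 1 ;;
  esac
}

# Export the variable only when the architecture was recognized.
if ZED_IMAGE="$(select_zed_image "$(uname -m)")"; then
  export ZED_IMAGE
  echo "ZED_IMAGE=$ZED_IMAGE"
fi
```

If the guess is wrong for your machine, set `ZED_IMAGE` by hand as described above.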

4. Select the appropriate camera model

export CAMERA_MODEL=<camera_model>

Replace <camera_model> with the appropriate camera model from the table below.

| Product Name | Camera Model |
|---|---|
| ZED | zed |
| ZED Mini | zedm |
| ZED 2 | zed2 |
| ZED 2i | zed2i |
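For scripted setups, the table lookup can be expressed as a small function. This is only an illustrative sketch: `camera_model_for` is a hypothetical helper, and the mapping is copied from the table above.

```shell
# Hypothetical helper: translates a product name from the table
# into the value expected in CAMERA_MODEL.
camera_model_for() {
  # Normalize the name: lowercase and strip spaces, so "ZED 2i" becomes "zed2i".
  key="$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]' | tr -d ' ')"
  case "$key" in
    zed)     echo "zed" ;;
    zedmini) echo "zedm" ;;
    zed2)    echo "zed2" ;;
    zed2i)   echo "zed2i" ;;
    *)       echo "unknown product: $1" >&2; return 1 ;;
  esac
}

# Example: select the model for a ZED 2i and export it for docker compose.
CAMERA_MODEL="$(camera_model_for "ZED 2i")" && export CAMERA_MODEL
echo "CAMERA_MODEL=$CAMERA_MODEL"
```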

5. Run compose.yaml

xhost local:root

docker compose up

On the first run, the ROS 2 Docker image downloads configuration files and optimizes the camera. This may take several minutes.
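Because the compose file depends on the two variables exported in steps 3 and 4, a quick check before starting can save a failed run. The sketch below is an optional convenience and not part of the zed-docker repository; `check_zed_env` is a hypothetical name.

```shell
# Hypothetical helper: succeeds only if both variables from the
# previous steps are set and non-empty; reports each missing one.
check_zed_env() {
  missing=0
  if [ -z "${ZED_IMAGE:-}" ]; then
    echo "ZED_IMAGE is not set (see step 3)" >&2
    missing=1
  fi
  if [ -z "${CAMERA_MODEL:-}" ]; then
    echo "CAMERA_MODEL is not set (see step 4)" >&2
    missing=1
  fi
  return "$missing"
}

# Typical use, left commented out so the snippet has no side effects:
# check_zed_env && docker compose up
```

Running `docker compose config` in the demo directory prints the compose file with the variables substituted, which is another way to confirm the values before starting the containers.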

Result

You should now see a point cloud showing the positions of objects in 3D space. As you move the camera, you should also see the trajectory of its movement.

ROS API

The full ROS API of the camera can be found in the official documentation of the device.

Summary

Depth cameras are increasingly the basis of modern robotics projects. ZED cameras were among the first depth cameras to use CUDA cores, which allows for high accuracy while maintaining a high frame rate. In addition, ZED officially supports ROS 2 and continues to provide more effective solutions.

Need help with this article or experiencing issues with software or hardware? 🤔

- Feel free to share your thoughts and questions on our Community Forum. 💬

- To contact service support, please use our dedicated Issue Form. 📝

- Alternatively, you can also contact our support team directly at: support@husarion.com. 📧