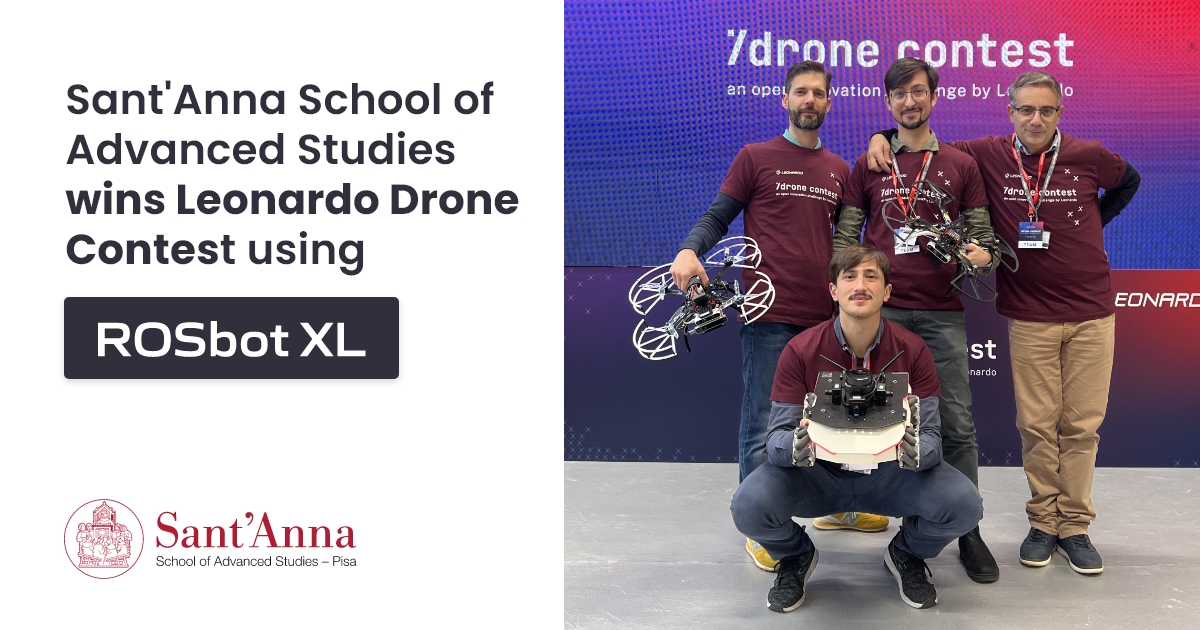

Sant'Anna School of Advanced Studies wins Leonardo Drone Contest using ROSbot XL

Unmanned Aerial Vehicles (UAVs), also known as drones, are used in a wide variety of applications, such as exploring zones affected by natural disasters, surveillance, and package delivery in urban areas. Drones offer opportunities that were previously unavailable: they can easily traverse rough terrain, quickly and efficiently explore unknown areas, and provide excellent communication capabilities. However, they also come with their own limitations. UAVs have short flight times due to limited battery capacity and cannot carry heavy payloads, such as more powerful computers, heavy sensors, or manipulators.

These constraints can be overcome by enabling cooperation between drones and Unmanned Ground Vehicles (UGVs), which offer higher payload and battery capacity. At the same time, UGVs can benefit from the drones' greater sensing range and their ability to get past difficult terrain. When a drone and a mobile robot cooperate to map and navigate unknown terrain, they have the potential to complete specific tasks much more efficiently, opening up a whole new realm of possible applications.

Yet solving the problem of effective cooperation between a drone and a ground mobile robot is no piece of cake. The team from the Sant'Anna School of Advanced Studies understands this challenge well: they recently won the Leonardo Drone Contest using ROSbot XL and their own drone. To achieve this, they had to develop a joint exploration and navigation strategy that gave them the best chance of finding all the tags placed in a GNSS-denied, partially unknown environment prepared by the contest organizers.

One of the critical decisions they had to make was choosing the right robotic platform. It had to let them implement their strategy easily, integrate smoothly with additional sensors and open-source software, and lay a solid foundation for developing localization and navigation algorithms.

Wondering what assumptions stood behind their winning strategy? Keep reading to learn about the approach they adopted to develop the most efficient cooperation algorithms and the benefits of using ROSbot XL for the task.

Three cooperative missions in GNSS-denied areas

Before delving deeper into the technical details of the project, let’s take a closer look at the challenges the team had to face.

The Leonardo Drone Contest is a yearly inter-university competition designed and launched by Leonardo, a global industrial group that builds technological capabilities in aerospace, defense, and security, and is trusted by partners among governments, defense agencies, institutions, and enterprises. This year, Leonardo supports teams from seven Italian universities taking part in the challenge. Its aim is to encourage the development of AI applied to uncrewed systems and to foster an innovation ecosystem that brings together the capabilities of companies and universities.

To tackle the tasks, each team is required to use three platforms: one Unmanned Aerial Vehicle, one Unmanned Ground Vehicle, and one fixed Pan-Tilt-Zoom (PTZ) camera. Teams have complete freedom in choosing the platforms and the collaboration strategy they wish to implement. To encourage participants to develop vision-based localization algorithms, the rules forbid mounting a LIDAR on the drone while permitting its use on the mobile robot, which leads to greater reliance on the UGV's localization algorithm. Teams are also required to use ROS 2 and Docker as their software stack, and this guideline is not without reason. As one of the competition organizers states:

"Mastering [these tools] will set you up for a stellar future in robotics and drones!"

The challenge itself consists of three 25-minute missions that have to be performed autonomously in partially unknown and GNSS-denied areas. During each mission, the robotics team must complete two independent tasks.

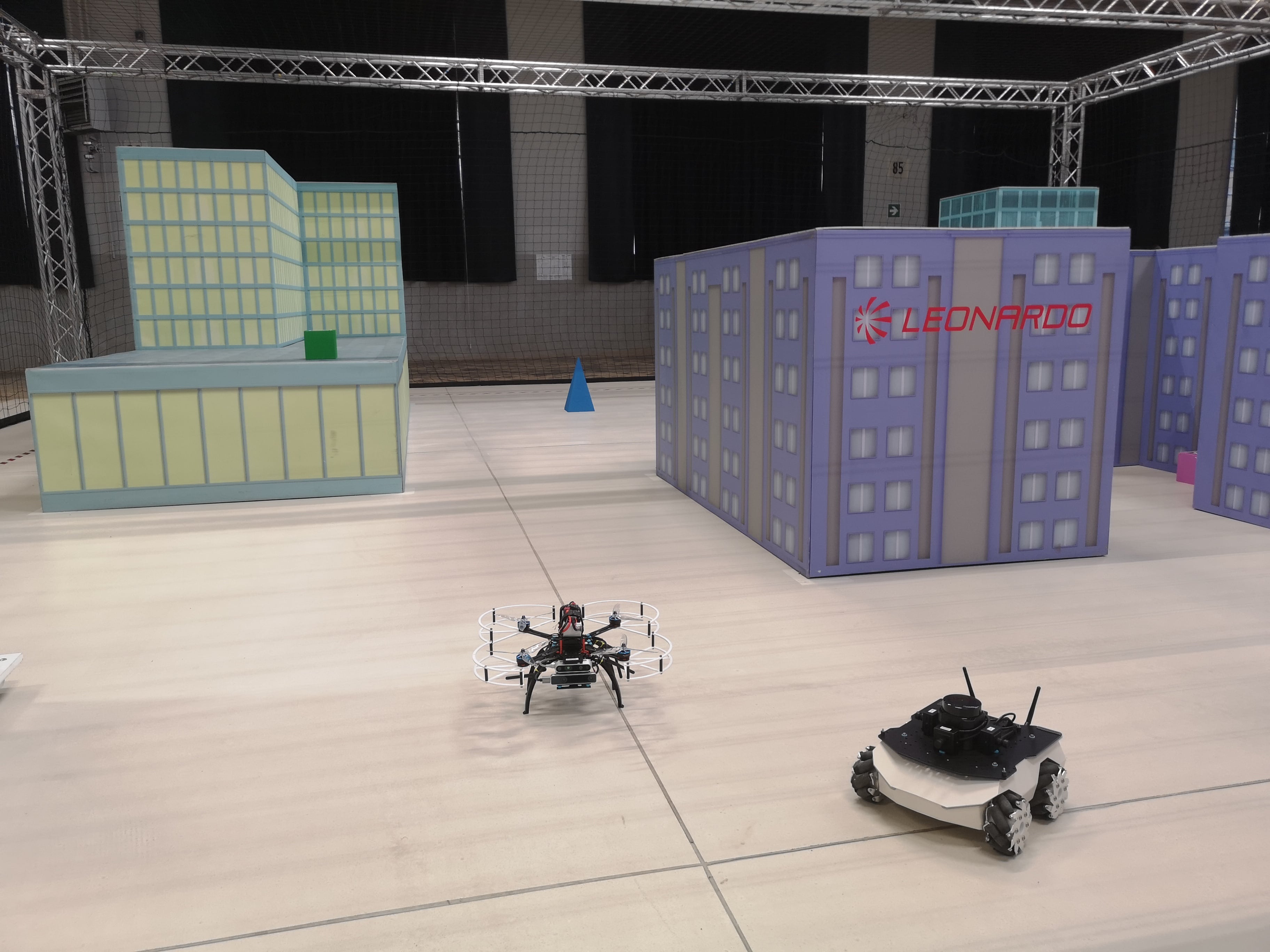

ROSbot XL and drone in a competition area

For the first task, the three agents (the mobile robot, the drone, and the PTZ camera) must detect the targets (ArUco markers) belonging to a list provided to the team. The markers are placed around the area so that some can be seen only by the drone, others are reachable only by the ground robot, and others are visible to all three agents, including the PTZ camera. The optimal strategy should allow the cooperating agents to find all the targets and localize them on the map precisely enough to report coordinates for each marker.
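Localizing a marker on the map essentially means chaining two poses: the robot's pose in the map frame (from SLAM) and the marker's offset relative to the robot (from the camera-based ArUco pose estimate, after applying the camera-to-base transform). The sketch below is a minimal 2D illustration under those assumptions, not the team's code. The `Pose2D` type, the `marker_to_map` and `fuse_sightings` helpers, and the idea of averaging repeated sightings are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    """Robot pose in the map frame (hypothetical type for illustration)."""
    x: float    # metres
    y: float    # metres
    yaw: float  # radians, counter-clockwise from the map x axis


def marker_to_map(robot: Pose2D, dx: float, dy: float) -> tuple[float, float]:
    """Rotate a robot-frame offset (dx forward, dy left) by the robot's yaw
    and translate it by the robot's position to get map coordinates."""
    c, s = math.cos(robot.yaw), math.sin(robot.yaw)
    return robot.x + c * dx - s * dy, robot.y + s * dx + c * dy


def fuse_sightings(points: list[tuple[float, float]]) -> tuple[float, float]:
    """Average several map-frame estimates of the same marker
    (an assumed strategy for reducing per-detection noise)."""
    n = len(points)
    return sum(p[0] for p in points) / n, sum(p[1] for p in points) / n


# Hypothetical sightings of one marker from two different robot poses.
first = marker_to_map(Pose2D(2.0, 1.0, math.pi / 2), 1.5, 0.0)
second = marker_to_map(Pose2D(3.5, 2.5, math.pi), 1.5, 0.0)
print(fuse_sightings([first, second]))
```

In a ROS 2 system, this frame chaining would normally be delegated to tf2 rather than written by hand; the arithmetic above only shows what such a lookup computes.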

The second task involves detecting an ArUco marker that does not belong to the provided list and using the PTZ camera to take a picture showing the aerial drone, the ground robot, and the detected marker.

Each team can make several attempts to complete the mission, each time resetting any knowledge of marker locations and maps the robots gained in earlier attempts. When the mission time expires, the team must send its best result to the organizers, who calculate the score as the sum of the results of the two tasks.

How to design a winning algorithm

The efficiency of the strategy for robot-drone cooperation in an unknown environment is determined by a series of decisions. Should the robots start exploring the area at the same moment, building a map of the area together? Or would a better strategy be to create a map with one of the robots and then share it with the other? Which sensors should be mounted on each robot, and in what positions? What exploration strategy will maximize the chance of finding all the targets?

All these questions were faced by the team from the Institute of Mechanical Intelligence at the Sant'Anna School of Advanced Studies, consisting of Massimo Teppati Losè and Michael Mugnai (Ph.D. students), Massimo Satler (Ph.D.), and Prof. Carlo Alberto Avizzano.

Michael Mugnai and Massimo Teppati Losè during the Leonardo Drone Contest

To understand their approach, it’s best to view the strategy for finding the targets as consisting of three main components: localization, navigation and exploration.

Localization is the part of the algorithm that determines exactly where the robot is in space. As mentioned earlier, the competition rules prohibited mounting a LIDAR on the drone. That's why the team from Sant'Anna School based their localization strategy mostly on algorithms running on the LIDAR-equipped ROSbot XL, which estimated the positions of the robot and the markers more precisely than the vision-based localization algorithms implemented on the drone.

The second important component was the navigation strategy, which enables both robots to move from point A to point B while avoiding obstacles along the way. As with localization, the team decided to rely mainly on the ground robot to gather the information required to navigate the area. ROSbot XL began autonomously exploring and mapping the environment with the slam_toolbox and nav2 packages right at the start of the mission. It then shared the resulting 2D grid map with the drone, which took off later and used vision-based algorithms to localize itself on the map and navigate the environment.
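A shared 2D grid map is only useful if both agents interpret it the same way. In ROS 2, such maps are typically published as `nav_msgs/msg/OccupancyGrid`, which stores a resolution, an origin, and a row-major data array in which -1 means unknown and 0 to 100 encodes occupancy probability. The pure-Python sketch below mirrors those fields to show how a map-frame point is looked up. The `GridMap` class, the unrotated origin, and the free-space threshold of 50 are simplifying assumptions, not details of the team's system.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class GridMap:
    """Minimal stand-in for an OccupancyGrid (hypothetical class)."""
    resolution: float  # metres per cell
    width: int         # number of cells along x
    height: int        # number of cells along y
    origin_x: float    # map-frame position of cell (0, 0), assumed unrotated
    origin_y: float
    data: list[int]    # row-major: -1 unknown, 0..100 occupancy probability

    def world_to_cell(self, x: float, y: float) -> Optional[tuple[int, int]]:
        """Return (row, col) for a map-frame point, or None if outside the map."""
        col = math.floor((x - self.origin_x) / self.resolution)
        row = math.floor((y - self.origin_y) / self.resolution)
        if 0 <= col < self.width and 0 <= row < self.height:
            return row, col
        return None

    def is_free(self, x: float, y: float, threshold: int = 50) -> bool:
        """Treat known cells below the (assumed) threshold as traversable."""
        cell = self.world_to_cell(x, y)
        if cell is None:
            return False
        value = self.data[cell[0] * self.width + cell[1]]
        return 0 <= value < threshold


# Hypothetical 4 x 3 map at 0.5 m/cell whose corner sits at (-1, -1).
grid = GridMap(0.5, 4, 3, -1.0, -1.0,
               [0, 0, 0, -1,
                0, 100, 0, -1,
                0, 0, 0, -1])
print(grid.world_to_cell(0.2, 0.3), grid.is_free(0.2, 0.3))
```

Unknown cells are deliberately reported as not free here, a conservative choice for an agent that did not build the map itself.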

ROSbot XL exploring the area - RViz recording

Finally, to find as many ArUco markers as possible, each robot had its own exploration strategy, defining where it would go first to explore the environment and look for markers. On ROSbot XL, the team implemented the Frontier Exploration Algorithm. To detect markers faster and more precisely, they also took advantage of ROSbot's omnidirectional wheels. By placing four monocular cameras around the LIDAR, they eliminated any need for the robot to rotate, since angular movements can cause motion blur that prevents markers from being detected in the camera images. This clever idea certainly paid off: the team not only won the competition but also detected the largest number of ground markers, which were accessible only to the UGV.
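The article does not describe the team's implementation of frontier exploration, but the core idea of the classic approach can be sketched briefly: a frontier is a known-free cell that borders unknown space, adjacent frontier cells are grouped into clusters, and the robot keeps driving toward a chosen cluster until no frontiers remain. The minimal version below works on a list-of-lists grid using the -1/0/100 convention. The function names, the "nearest centroid" goal selection, and the `min_size` filter are illustrative assumptions.

```python
from collections import deque

UNKNOWN = -1
FREE = 0  # simplification: only cells with value 0 count as free


def is_frontier(grid, r, c):
    """A frontier cell is free and has at least one 4-connected unknown neighbour."""
    if grid[r][c] != FREE:
        return False
    for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
        nr, nc = r + dr, c + dc
        if 0 <= nr < len(grid) and 0 <= nc < len(grid[0]) and grid[nr][nc] == UNKNOWN:
            return True
    return False


def find_frontier_clusters(grid):
    """Group frontier cells into 8-connected clusters using breadth-first search."""
    frontier = {(r, c) for r in range(len(grid)) for c in range(len(grid[0]))
                if is_frontier(grid, r, c)}
    clusters, seen = [], set()
    for start in sorted(frontier):
        if start in seen:
            continue
        seen.add(start)
        cluster, queue = [], deque([start])
        while queue:
            r, c = queue.popleft()
            cluster.append((r, c))
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    n = (r + dr, c + dc)
                    if n in frontier and n not in seen:
                        seen.add(n)
                        queue.append(n)
        clusters.append(cluster)
    return clusters


def pick_goal(clusters, robot_cell, min_size=1):
    """Return the centroid (row, col) of the nearest cluster with at least
    min_size cells, or None when nothing is left to explore."""
    best = None
    for cluster in clusters:
        if len(cluster) < min_size:
            continue
        cr = sum(r for r, _ in cluster) / len(cluster)
        cc = sum(c for _, c in cluster) / len(cluster)
        dist2 = (cr - robot_cell[0]) ** 2 + (cc - robot_cell[1]) ** 2
        if best is None or dist2 < best[0]:
            best = (dist2, (cr, cc))
    return None if best is None else best[1]


grid = [
    [0, 0, 0, -1],
    [0, 100, 0, -1],
    [0, 0, 0, -1],
]
clusters = find_frontier_clusters(grid)
print(clusters, pick_goal(clusters, robot_cell=(1, 0)))
```

A real explorer would also check that the centroid lies in reachable free space before using it as a goal; in a ROS 2 stack, that goal could then be converted to map coordinates and handed to a planner such as nav2.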

ROSbot XL - an affordable, ROS 2-native mobile platform

Designing an efficient cooperation strategy wasn’t the only condition for achieving a high score. To excel in the competition, the team had to pair it with the right platform that would enable easy implementation of the strategy and smooth integration with all necessary components, as well as ensure high reliability during the task.

Why did their choice fall on ROSbot XL?

One of the reasons was the competition’s requirement to program the robots in ROS 2. As a ROS 2-native robot with available open-source Docker images for mapping, navigation, and more, ROSbot XL was certainly worth a shot.

“I really appreciate the quality of the code that the Husarion team has made open source, both for the low level and the high level. This allowed me to develop code for the mission, having a solid hardware and software base on the mechatronic side of the low-level”, says Massimo Teppati Losè, a member of the team.

At the same time, ROSbot XL offered easy integration with various components thanks to its universal mounting plate and modular electronic design. The team had no issues integrating a LIDAR and four monocular cameras, while fitting an Intel NUC inside to provide sufficient computing power. Thanks to the option of replacing the regular wheels with mecanum ones and ROSbot's compact size, the robot could move through an unstructured environment in an agile way without having to rotate, which proved very effective for the exploration strategy.
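Why mecanum wheels remove the need to rotate can be seen from their inverse kinematics: any combination of forward velocity `vx`, lateral velocity `vy`, and yaw rate `wz` maps to four wheel speeds, so the robot can translate toward a marker with `wz = 0` while its cameras keep a fixed heading. The sketch below uses the textbook equations for a four-wheel mecanum base. The sign convention (which wheel pairs spin backward when strafing left) depends on roller orientation and is assumed here, and the wheel radius and half-wheelbase/half-track values are hypothetical placeholders, not ROSbot XL's actual parameters or firmware.

```python
from dataclasses import dataclass


@dataclass
class MecanumBase:
    wheel_radius: float  # metres (hypothetical value in the example below)
    half_length: float   # lx: half the distance between front and rear axles
    half_width: float    # ly: half the distance between left and right wheels

    def inverse(self, vx, vy, wz):
        """Body velocity (m/s, m/s, rad/s) -> wheel speeds (rad/s) in the
        order front-left, front-right, rear-left, rear-right."""
        k = self.half_length + self.half_width
        r = self.wheel_radius
        return ((vx - vy - k * wz) / r,
                (vx + vy + k * wz) / r,
                (vx + vy - k * wz) / r,
                (vx - vy + k * wz) / r)

    def forward(self, fl, fr, rl, rr):
        """Wheel speeds (rad/s) -> body velocity; undoes inverse()."""
        k = self.half_length + self.half_width
        r = self.wheel_radius
        vx = r * (fl + fr + rl + rr) / 4
        vy = r * (-fl + fr + rl - rr) / 4
        wz = r * (-fl + fr - rl + rr) / (4 * k)
        return vx, vy, wz


# Hypothetical geometry; a diagonal move with no rotation at all.
base = MecanumBase(wheel_radius=0.05, half_length=0.1, half_width=0.12)
wheels = base.inverse(vx=0.3, vy=0.2, wz=0.0)
print(wheels, base.forward(*wheels))
```

With `wz = 0`, the diagonal wheel pairs always receive equal speeds, which is what lets the base slide in any direction without changing its heading. In ROS 2, such a command would typically arrive as a `geometry_msgs/msg/Twist` with a non-zero `linear.y`, and a drive controller would perform a conversion of this kind.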

Despite all these advantages, ROSbot XL came at a moderate price, allowing the team to allocate the rest of their budget, which was limited by the challenge rules, to other expenses such as additional sensors and components for their drone.

It’s not the end!

This year’s competition is over, but the team is already preparing for even more challenging scenarios. Among other plans, they intend to enhance ROSbot XL with stereo cameras and an upgraded onboard computer. They also aim to expand their cooperation system with a second drone and to develop cooperative SLAM for the resulting three-agent system.

If you think ROSbot XL may prove equally useful in your use case, don’t hesitate to see it in our store, check out its manual or contact us with any questions you may have at contact@husarion.com.

The project was conducted by Ph.D. students Massimo Teppati Losè and Michael Mugnai, under the supervision of Massimo Satler, Ph.D., and Prof. Carlo Alberto Avizzano, from the Intelligent Automation Systems research group, Institute of Mechanical Intelligence at the Sant'Anna School of Advanced Studies.